A neural network is an algorithm that imitates how neurons and the connections between them work, and that is trained until it can perform a task. A neural network is said to learn through training because the task is never programmed explicitly; instead, the network programs itself from examples. Neural networks are the foremost exponent of what is known as machine learning.

Neural networks can learn to classify and to imitate the behavior of complex systems. If we want a network to learn to tell apples from oranges, we only need to show it a number of examples of both fruits and tell it, each time, whether it is looking at an apple or an orange. Once trained, the neural network will know whether it is facing an apple or an orange. The interesting part is that it will know even when the apples and oranges are not the ones it saw during training, because neural networks do not memorize; they generalize. That is the key to machine learning.
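The apples-and-oranges idea can be sketched with a single artificial neuron (a perceptron). This is only an illustration under stated assumptions: the two features (redness and weight), every number in the toy data set, and the function names `predict` and `train` are invented for the example and do not come from any real data set or library.

```python
# Toy training set. Each fruit is described by two invented features:
# (redness between 0 and 1, weight in hundreds of grams).
# Label 1 means "apple", label 0 means "orange".
TRAINING = [
    ((0.90, 1.5), 1),
    ((0.80, 1.6), 1),
    ((0.85, 1.4), 1),
    ((0.30, 2.0), 0),
    ((0.20, 2.2), 0),
    ((0.25, 1.9), 0),
]


def predict(weights, bias, features):
    """Return 1 (apple) if the weighted sum is positive, else 0 (orange)."""
    activation = bias + sum(w * x for w, x in zip(weights, features))
    return 1 if activation > 0 else 0


def train(samples, epochs=50, learning_rate=0.1):
    """Perceptron learning rule: adjust the weights only after a mistake."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in samples:
            error = label - predict(weights, bias, features)
            bias += learning_rate * error
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
    return weights, bias


weights, bias = train(TRAINING)

# Two fruits that were NOT part of the training set.
unseen_apple = (0.95, 1.3)
unseen_orange = (0.15, 2.1)
print("unseen apple classified as:", predict(weights, bias, unseen_apple))
print("unseen orange classified as:", predict(weights, bias, unseen_orange))
```

Nothing in the code says what an apple is. The weights are adjusted from the labeled examples alone, and the two fruits at the end are classified without ever having been shown during training, which is the generalization described above.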

Interest in neural networks declined around the turn of the century. On the one hand, the business world had not seen all its expectations met; on the other, the academic world turned to algorithms that looked more promising. However, some researchers, especially those around the University of Montreal, persevered in the study of neural networks and evolved them into what they called Deep Learning.

Deep Learning is a family of algorithms related to neural networks that serve the same purpose and often perform better than other forms of Machine Learning. The main difference is the capacity for abstraction. Going back to the previous example, classifying oranges and apples with a neural network requires extracting features that define the fruits. These features may be color, shape, size, and so on. Representing the fruits through these features is a form of abstraction that must be designed by the person who trains the neural network. Deep Learning algorithms, by contrast, can carry out a similar abstraction by themselves, without anyone having to design it beforehand. For this reason it is said that Deep Learning is not only able to learn but can also find meaning.
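As a rough sketch of what designing the abstraction by hand means, the function below reduces an image to two numbers chosen by a person. The feature choice (average red and green intensity), the tiny 2x2 images, and the name `extract_features` are assumptions made for this illustration only.

```python
def extract_features(pixels):
    """Reduce an image to two hand-picked features.

    `pixels` is a list of rows, each row a list of (r, g, b) tuples
    with channel values from 0 to 255. The result is the average red
    and the average green intensity, both scaled to the range 0..1.
    """
    flat = [pixel for row in pixels for pixel in row]
    count = len(flat)
    mean_red = sum(r for r, _, _ in flat) / count / 255
    mean_green = sum(g for _, g, _ in flat) / count / 255
    return (mean_red, mean_green)


# Two made-up 2x2 "photos": a reddish fruit and an orange-colored one.
apple_image = [[(200, 30, 40), (210, 35, 45)],
               [(190, 25, 35), (205, 30, 40)]]
orange_image = [[(255, 165, 0), (250, 160, 5)],
                [(245, 170, 10), (255, 150, 0)]]

print("apple features:", extract_features(apple_image))
print("orange features:", extract_features(orange_image))
```

A classical neural network would be trained on the output of a function like this one, so its quality depends on how well the person chose the features. A Deep Learning model would instead receive the raw pixel values and learn its own intermediate representation during training.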

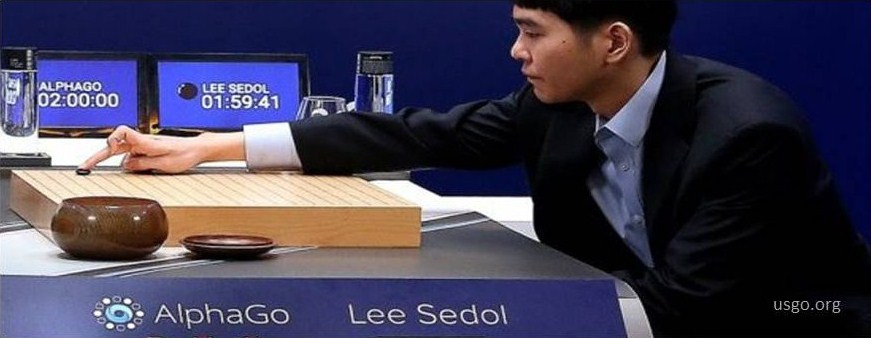

Deep Learning has appeared in the media because of the interest large companies have taken in it and also because of how spectacular its technological achievements have been. In March 2016, the media reported how AlphaGo, a program from Google DeepMind, beat the Go champion Lee Sedol. This was an unprecedented technical achievement, since the strategy followed with chess cannot be used with Go. When Garry Kasparov lost a chess match in 1997, he lost to a machine, IBM's Deep Blue, that was programmed to search through enormous numbers of possible future moves. By contrast, the Google DeepMind machine was not programmed to play Go; it was taught to play Go before facing Lee Sedol. It first learned from records of games between human experts and then by playing against other versions of itself, and it had already beaten the European Go champion, Fan Hui, in October 2015. Game by game, the machine improved its play until it was strong enough to win the match against Lee Sedol four games to one.

Large companies such as Google and Facebook routinely use Deep Learning in their products to recognize faces and to interpret natural language. There are also small companies, such as Artelnics and Numenta, that offer products based on this technology that can be applied to many industrial processes. Applications based on Deep Learning can be expected to grow considerably, both because of the need to automate the intelligent processing of the enormous amounts of data generated every day and because a number of open source tools, such as Theano, TensorFlow, H2O and OpenAI Gym, put these algorithms within everyone's reach.

The success of industrial applications of Deep Learning will depend on the availability of large amounts of good-quality data, on the computing resources available, and on applying it to suitable problems. Detecting and classifying defects or faults, modeling systems in order to control them, and detecting anomalies could be the first successful practical applications.
